1. Using torchstat

```shell
pip install torchstat
```

```python
from torchstat import stat
import torchvision.models as models

model = models.resnet152()
stat(model, (3, 224, 224))
```

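The first argument of `stat` is the model and the second is the input size as (channels, height, width), where 3 is the number of channels. I haven't looked into the function's other parameters in detail, and I'm not sure why my editor shows no parameter hints when I call it.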
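To make the second argument concrete: it describes a single sample as (channels, height, width), with no batch dimension. The plain-Python sketch below is not torchstat code; it assumes, as is common for such tools, that a dummy batch of one sample is built from that shape, and `describe_input` is a hypothetical helper name. It also estimates how much float32 memory that input occupies.

```python
from math import prod


def describe_input(input_size, batch=1, bytes_per_elem=4):
    """Hypothetical helper: expand a (C, H, W) input size into a batched
    (N, C, H, W) shape and estimate its float32 memory footprint in MB."""
    channels, height, width = input_size  # e.g. (3, 224, 224): 3 = RGB channels
    batched_shape = (batch, channels, height, width)  # N, C, H, W layout used by nn.Conv2d
    num_elems = prod(batched_shape)
    size_mb = num_elems * bytes_per_elem / 1024 ** 2
    return batched_shape, num_elems, size_mb


shape, n, mb = describe_input((3, 224, 224))
print(shape, n, round(mb, 2))  # (1, 3, 224, 224) 150528 0.57
```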

2. Using torchsummary

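```shell
pip install torchsummary
```

```python
from torchsummary import summary

# model is the resnet152 created above; torchsummary's default device is "cuda",
# so the model is moved to the GPU first
summary(model.cuda(), input_size=(3, 32, 32), batch_size=-1)
```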

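This function shows its parameters directly in the editor hints, and it takes an explicit `batch_size` argument, so my impression is that it is the nicer of the two. But for some reason it kept raising an error on my machine: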

TypeError: can't convert CUDA tensor to numpy. Use Tensor.cpu() to copy the tensor to host memory first.
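The error message itself states the general rule: NumPy arrays live in host memory, so a tensor on the GPU has to be copied back with `.cpu()` before `.numpy()` is called. The minimal sketch below shows that pattern in isolation (it is not the torchsummary source); it falls back to the CPU when no GPU is available, so it runs either way. `to_numpy` is a hypothetical helper name.

```python
import torch


def to_numpy(t: torch.Tensor):
    """Hypothetical helper: convert a tensor that may live on the GPU to NumPy."""
    # .detach() drops autograd history; .cpu() is a no-op for tensors already on the CPU
    return t.detach().cpu().numpy()


device = "cuda" if torch.cuda.is_available() else "cpu"
x = torch.ones(2, 3, device=device)

# Calling x.numpy() directly raises the TypeError above when x is on the GPU
arr = to_numpy(x)
print(type(arr).__name__, arr.shape)  # ndarray (2, 3)
```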

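Update: after asking on a forum, I found the cause of the error. The fix is simply to install the `torch-summary` package instead:

```shell
pip install torch-summary
```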

Supplement: viewing model parameters in PyTorch and counting the total and trainable parameters

Viewing model parameters (using AlexNet as an example)

```python
import torch
import torch.nn as nn
import torchvision


class AlexNet(nn.Module):
    def __init__(self, num_classes=1000):
        super(AlexNet, self).__init__()
        self.feature_extraction = nn.Sequential(
            nn.Conv2d(in_channels=3, out_channels=96, kernel_size=11, stride=4, padding=2, bias=False),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=0),
            nn.Conv2d(in_channels=96, out_channels=192, kernel_size=5, stride=1, padding=2, bias=False),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=0),
            nn.Conv2d(in_channels=192, out_channels=384, kernel_size=3, stride=1, padding=1, bias=False),
            nn.ReLU(inplace=True),
            nn.Conv2d(in_channels=384, out_channels=256, kernel_size=3, stride=1, padding=1, bias=False),
            nn.ReLU(inplace=True),
            nn.Conv2d(in_channels=256, out_channels=256, kernel_size=3, stride=1, padding=1, bias=False),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=0),
        )
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),
            nn.Linear(in_features=256 * 6 * 6, out_features=4096),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(in_features=4096, out_features=4096),
            nn.ReLU(inplace=True),
            nn.Linear(in_features=4096, out_features=num_classes),
        )

    def forward(self, x):
        x = self.feature_extraction(x)
        x = x.view(x.size(0), 256 * 6 * 6)  # flatten to (batch, 9216)
        x = self.classifier(x)
        return x


if __name__ == '__main__':
    # model = torchvision.models.AlexNet()
    model = AlexNet()

    # Print the model parameters
    # for param in model.parameters():
    #     print(param)

    # Print each parameter's name and shape
    for name, parameters in model.named_parameters():
        print(name, ':', parameters.size())
```
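The hard-coded `256*6*6` in `forward` comes from tracking the spatial size through the feature extractor. For convolution and pooling with dilation 1 and floor rounding (the defaults of `nn.Conv2d` and `nn.MaxPool2d`), out = floor((in + 2·padding − kernel) / stride) + 1. The plain-Python sketch below applies that formula to the layer settings of the class above, assuming a 224×224 input; `out_size` is a hypothetical helper.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Output height/width of a conv or pooling layer (dilation=1, floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


# (kernel, stride, padding) of each spatial layer in AlexNet.feature_extraction, in order
layers = [
    (11, 4, 2),  # conv1
    (3, 2, 0),   # maxpool
    (5, 1, 2),   # conv2
    (3, 2, 0),   # maxpool
    (3, 1, 1),   # conv3
    (3, 1, 1),   # conv4
    (3, 1, 1),   # conv5
    (3, 2, 0),   # maxpool
]

size = 224
for kernel, stride, padding in layers:
    size = out_size(size, kernel, stride, padding)
    print(size)  # prints 55, 27, 27, 13, 13, 13, 13, 6 on successive lines

flat_features = 256 * size * size
print(flat_features)  # 9216, the in_features of classifier.1
```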

Output:

```text
feature_extraction.0.weight : torch.Size([96, 3, 11, 11])
feature_extraction.3.weight : torch.Size([192, 96, 5, 5])
feature_extraction.6.weight : torch.Size([384, 192, 3, 3])
feature_extraction.8.weight : torch.Size([256, 384, 3, 3])
feature_extraction.10.weight : torch.Size([256, 256, 3, 3])
classifier.1.weight : torch.Size([4096, 9216])
classifier.1.bias : torch.Size([4096])
classifier.4.weight : torch.Size([4096, 4096])
classifier.4.bias : torch.Size([4096])
classifier.6.weight : torch.Size([1000, 4096])
classifier.6.bias : torch.Size([1000])
```
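Each parameter's element count is the product of its shape, so the printed shapes are enough to total the model by hand. The plain-Python sketch below copies the shapes from the output above (the convolutions have no bias entries because they were built with `bias=False`) and sums them.

```python
from math import prod

# Shapes copied from the named_parameters() output above
shapes = {
    "feature_extraction.0.weight": (96, 3, 11, 11),
    "feature_extraction.3.weight": (192, 96, 5, 5),
    "feature_extraction.6.weight": (384, 192, 3, 3),
    "feature_extraction.8.weight": (256, 384, 3, 3),
    "feature_extraction.10.weight": (256, 256, 3, 3),
    "classifier.1.weight": (4096, 9216),
    "classifier.1.bias": (4096,),
    "classifier.4.weight": (4096, 4096),
    "classifier.4.bias": (4096,),
    "classifier.6.weight": (1000, 4096),
    "classifier.6.bias": (1000,),
}

# Element count of each tensor = product of its dimensions
counts = {name: prod(shape) for name, shape in shapes.items()}
conv_total = sum(n for name, n in counts.items() if name.startswith("feature_extraction"))
fc_total = sum(n for name, n in counts.items() if name.startswith("classifier"))
total = conv_total + fc_total

print(conv_total)  # 2633760
print(fc_total)    # 58631144
print(total)       # 61264904
```

The fully connected layers hold roughly 96% of the weights, and `classifier.1` alone accounts for over 37 million of them.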

Counting the total and trainable parameters

```python
def get_parameter_number(model):
    total_num = sum(p.numel() for p in model.parameters())
    trainable_num = sum(p.numel() for p in model.parameters() if p.requires_grad)
    return {'Total': total_num, 'Trainable': trainable_num}
```
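The two numbers only differ once some parameters stop requiring gradients, for example when layers are frozen for fine-tuning. The sketch below applies the same function to a small hypothetical two-layer model (chosen so the counts are easy to check by hand) and freezes its first layer with `requires_grad_(False)`.

```python
import torch.nn as nn


def get_parameter_number(model):
    total_num = sum(p.numel() for p in model.parameters())
    trainable_num = sum(p.numel() for p in model.parameters() if p.requires_grad)
    return {'Total': total_num, 'Trainable': trainable_num}


# Hypothetical toy model, for illustration only
model = nn.Sequential(
    nn.Linear(4, 3),  # 4*3 weights + 3 biases = 15
    nn.ReLU(),
    nn.Linear(3, 2),  # 3*2 weights + 2 biases = 8
)
print(get_parameter_number(model))  # {'Total': 23, 'Trainable': 23}

# Freeze the first layer, as one might when fine-tuning
model[0].requires_grad_(False)
print(get_parameter_number(model))  # {'Total': 23, 'Trainable': 8}
```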

Third-party tools

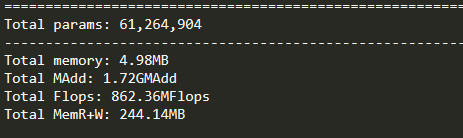

```python
from torchstat import stat
import torchvision.models as models

model = models.alexnet()
stat(model, (3, 224, 224))
```

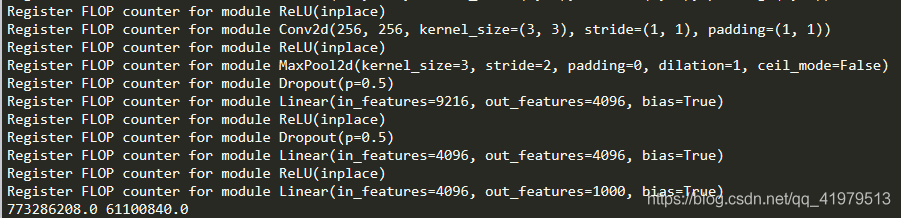

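```python
# pip install thop
import torch
from torchvision.models import alexnet
from thop import profile

model = alexnet()
dummy_input = torch.randn(1, 3, 224, 224)  # a batch of one 3x224x224 image
# thop counts multiply-accumulate operations (MACs); this post labels that value flops
flops, params = profile(model, inputs=(dummy_input,))
print(flops, params)
```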
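Note that thop's `profile` counts multiply-accumulate operations (MACs); thop's own README names the first return value `macs`, and FLOPs are often estimated as roughly 2 × MACs. As a rough illustration of what gets counted, the plain-Python sketch below computes the MACs of a single bias-free convolution by hand: each output element is a dot product of length C_in × k × k. This is the textbook formula, not thop's implementation, whose per-layer rules (for example, whether bias additions are counted) may differ; `conv2d_macs` is a hypothetical helper.

```python
def conv2d_macs(c_in, c_out, kernel, out_h, out_w):
    """Multiply-accumulates of a bias-free Conv2d (groups=1, square kernel)."""
    # each of the c_out * out_h * out_w outputs needs c_in * kernel * kernel multiply-adds
    return c_out * out_h * out_w * c_in * kernel * kernel


# First conv of the AlexNet class defined earlier, at a 224x224 input:
# 3 -> 96 channels, 11x11 kernel, 55x55 output
macs = conv2d_macs(c_in=3, c_out=96, kernel=11, out_h=55, out_w=55)
print(macs)      # 105415200
print(2 * macs)  # 210830400, the corresponding rough FLOP estimate
```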

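The above is my personal experience. I hope it serves as a useful reference, and I hope you will keep supporting 脚本之家 (Script House). If anything here is wrong or not fully thought through, please don't hesitate to point it out.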
