| VM | IP | Description |
|---|---|---|
| Keepalived+Nginx1[Master] | 192.168.43.101 | Nginx Server 01 |
| Keepalived+Nginx2[Backup] | 192.168.43.102 | Nginx Server 02 |
| Tomcat01 | 192.168.43.103 | Tomcat Web Server01 |
| Tomcat02 | 192.168.43.104 | Tomcat Web Server02 |
| VIP | 192.168.43.150 | Floating virtual IP |

1. Change Tomcat's default welcome page so that each web server can be identified when requests switch between them

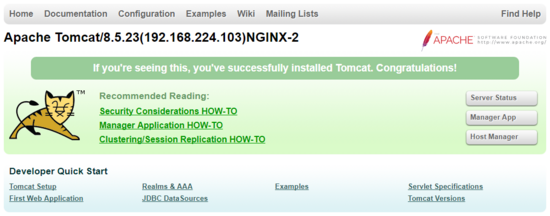

Edit ROOT/index.jsp on the TomcatServer01 node to display the Tomcat IP address and the value of the Nginx header. That is, modify node 192.168.43.103 as follows:

<div id="asf-box">

<h1>${pageContext.servletContext.serverInfo}(192.168.43.103)<%=request.getHeader("X-NGINX")%></h1>

</div>

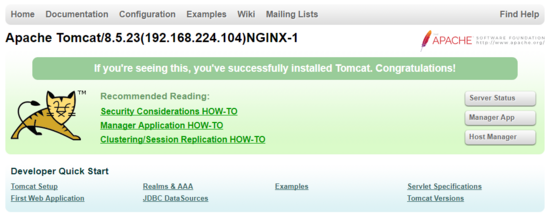

Edit ROOT/index.jsp on the TomcatServer02 node in the same way, displaying the Tomcat IP address and the value of the Nginx header. That is, modify node 192.168.43.104 as follows:

<div id="asf-box">

<h1>${pageContext.servletContext.serverInfo}(192.168.43.104)<%=request.getHeader("X-NGINX")%></h1>

</div>

2. Start the Tomcat services and check the IP shown by each one. Nginx is not running yet, so the request header carries no Nginx information.
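To read the marker from the command line instead of a browser, the text of the `<h1>` element can be extracted with `sed`. This is only a sketch: `extract_marker` is a hypothetical helper name, and it assumes the page still contains the `<h1>` line in the form shown above.

```shell
#!/bin/sh
# Hypothetical helper: reads page HTML on stdin and prints
# "<backend-ip> <X-NGINX value>".
# Assumes the line looks like: <h1>serverInfo(IP)header-value</h1>
extract_marker() {
    sed -n 's/.*<h1>.*(\([0-9.]*\))\([^<]*\)<\/h1>.*/\1 \2/p'
}

# Usage against a live Tomcat node (not executed here):
#   curl -s http://192.168.43.103:8080/ | extract_marker
```

When Tomcat is queried directly, `request.getHeader("X-NGINX")` has no value to return, so the second field should read `null` until requests arrive through Nginx.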

3. Configure the Nginx proxy

1. Configure the proxy on the Master node [192.168.43.101]

upstream tomcat {

server 192.168.43.103:8080 weight=1;

server 192.168.43.104:8080 weight=1;

}

server{

location / {

proxy_pass http://tomcat;

proxy_set_header X-NGINX "NGINX-1";

}

# ...... other settings omitted

}

2. Configure the proxy on the Backup node [192.168.43.102]

upstream tomcat {

server 192.168.43.103:8080 weight=1;

server 192.168.43.104:8080 weight=1;

}

server{

location / {

proxy_pass http://tomcat;

proxy_set_header X-NGINX "NGINX-2";

}

# ...... other settings omitted

}
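The two proxy configurations differ only in the value of the `X-NGINX` header. One way to keep them in sync is to generate both from a single template, as in the sketch below. `render_proxy_conf` is a hypothetical helper, and the settings the article omits from the `server` block are left out here too.

```shell
#!/bin/sh
# Hypothetical helper: prints the proxy configuration for one Nginx node.
# $1 is the label sent to Tomcat in the X-NGINX header (NGINX-1 or NGINX-2).
render_proxy_conf() {
    label="$1"
    cat <<EOF
upstream tomcat {
    server 192.168.43.103:8080 weight=1;
    server 192.168.43.104:8080 weight=1;
}
server {
    location / {
        proxy_pass http://tomcat;
        proxy_set_header X-NGINX "${label}";
    }
    # other settings omitted, as in the article
}
EOF
}

# Usage (not executed here): review the output before copying it into nginx.conf
#   render_proxy_conf NGINX-1   # Master, 192.168.43.101
#   render_proxy_conf NGINX-2   # Backup, 192.168.43.102
```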

3. Start the Nginx service on the Master node

[root@localhost init.d]# service nginx start

Starting nginx (via systemctl): [ OK ]

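Visiting 192.168.43.101 now shows the Tomcat pages of nodes 103 and 104 alternately, which means Nginx is distributing requests across the two Tomcat instances.

4. Configure Nginx on the Backup node [192.168.43.102] in the same way. After starting Nginx, visiting 192.168.43.102 shows that the Backup node is load balancing as well.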
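The alternation can also be checked without a browser by counting which backend answered each request. The sketch below covers only the counting step. `tally_backends` is a hypothetical helper, and the commented `curl` loop assumes that the backend IP printed in the `<h1>` is the first 103/104 address on the page.

```shell
#!/bin/sh
# Hypothetical helper: reads one backend IP per line on stdin and prints
# "<ip>=<number of responses>" for each distinct backend.
tally_backends() {
    sort | uniq -c | awk '{ print $2 "=" $1 }'
}

# Usage against a live Nginx node (not executed here):
#   for i in 1 2 3 4 5 6; do
#       curl -s http://192.168.43.101/ | grep -o '192\.168\.43\.10[34]' | head -n 1
#   done | tally_backends
```

With both upstream servers at `weight=1`, the two counts should come out roughly equal.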

4. Configure the Keepalived script

1. On both the Master and Backup nodes, add a check_nginx.sh file under /etc/keepalived to check whether Nginx is alive, and add a keepalived.conf file.

The contents of check_nginx.sh are as follows:

#!/bin/bash

# Timestamp used in log entries

d=`date --date today +%Y%m%d_%H:%M:%S`

# Count the running nginx processes

n=`ps -C nginx --no-heading|wc -l`

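# If the count is 0, start nginx and count its processes again.

# If it is still 0, nginx cannot be started, so keepalived must be stopped to let the VIP move to the other node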

if [ $n -eq "0" ]; then

/etc/rc.d/init.d/nginx start

n2=`ps -C nginx --no-heading|wc -l`

if [ $n2 -eq "0" ]; then

echo "$d nginx down,keepalived will stop" >> /var/log/check_ng.log

systemctl stop keepalived

fi

fi
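The branching in this script can be separated from the `ps` and `systemctl` calls so that it is testable on its own. The sketch below is not part of the original script. `decide_action` is a hypothetical name, and its two arguments stand for the process counts `n` and `n2` above.

```shell
#!/bin/sh
# Hypothetical helper mirroring the decision made by check_nginx.sh.
# $1: nginx process count before any restart attempt (n)
# $2: nginx process count after the restart attempt (n2)
decide_action() {
    before="$1"
    after="$2"
    if [ "$before" -gt 0 ]; then
        echo "healthy"            # nginx is running, nothing to do
    elif [ "$after" -gt 0 ]; then
        echo "restarted"          # nginx was down but came back
    else
        echo "stop-keepalived"    # nginx cannot be started, release the VIP
    fi
}

# Example: nginx was down and the restart failed
#   decide_action 0 0
```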

Once the file is in place, grant check_nginx.sh execute permission so that the script can be run.

[root@localhost keepalived]# chmod 755 /etc/keepalived/check_nginx.sh

2. On the Master node, add a keepalived.conf file under /etc/keepalived with the following contents:

vrrp_script chk_nginx {

script "/etc/keepalived/check_nginx.sh" # script that checks the nginx process

interval 2

weight -20

}

global_defs {

notification_email {

# email notification recipients can be added here

}

}

vrrp_instance VI_1 {

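state MASTER # marks this node as MASTER; the backup machine uses BACKUP

interface ens33 # network interface the instance binds to (check with ip addr and adjust to your own NIC)

virtual_router_id 51 # must be identical on all nodes of the same instance

mcast_src_ip 192.168.43.101

priority 250 # the MASTER priority must be higher than the BACKUP's, e.g. 240 on the BACKUP

advert_int 1 # interval, in seconds, between the advertisements exchanged by the MASTER and BACKUP load balancers

nopreempt # non-preemptive mode; per the non-preemptive mode section below, this needs state BACKUP on both nodes

authentication { # authentication settings

auth_type PASS # authentication method used between master and backup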

auth_pass 123456

}

track_script {

chk_nginx

}

virtual_ipaddress { # virtual IP (VIP) settings

192.168.43.150 # multiple virtual IPs are allowed, one per line

}

}

3. On the Backup node, add a keepalived.conf configuration file under /etc/keepalived

with the following contents:

vrrp_script chk_nginx {

script "/etc/keepalived/check_nginx.sh" # script that checks the nginx process

interval 2

weight -20

}

global_defs {

notification_email {

# email notification recipients can be added here

}

}

vrrp_instance VI_1 {

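state BACKUP # this node is the backup, so BACKUP; the master uses MASTER

interface ens33 # network interface the instance binds to (check with ip addr)

virtual_router_id 51 # must be identical on all nodes of the same instance

mcast_src_ip 192.168.43.102

priority 240 # must be lower than the MASTER's priority (250)

advert_int 1 # interval, in seconds, between the advertisements exchanged by the MASTER and BACKUP load balancers

nopreempt # non-preemptive mode; per the non-preemptive mode section below, this needs state BACKUP on both nodes

authentication { # authentication settings

auth_type PASS # authentication method used between master and backup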

auth_pass 123456

}

track_script {

chk_nginx

}

virtual_ipaddress { # virtual IP (VIP) settings

192.168.43.150 # multiple virtual IPs are allowed, one per line

}

}
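The interplay of `priority` and `weight -20` comes down to simple arithmetic. With a negative weight, keepalived lowers the node's priority by that amount while the tracked script is failing. With a positive weight, it raises the priority while the script succeeds. The sketch below only reproduces that calculation. `effective_priority` is a hypothetical helper, not a keepalived command.

```shell
#!/bin/sh
# Hypothetical helper: computes the priority a node advertises.
# $1: configured priority, $2: vrrp_script weight,
# $3: 1 if the tracked script succeeded (exit 0), 0 if it failed
effective_priority() {
    base="$1"
    weight="$2"
    script_ok="$3"
    if [ "$weight" -lt 0 ] && [ "$script_ok" -eq 0 ]; then
        echo $((base + weight))   # failing script, negative weight: lowered
    elif [ "$weight" -gt 0 ] && [ "$script_ok" -eq 1 ]; then
        echo $((base + weight))   # succeeding script, positive weight: raised
    else
        echo "$base"
    fi
}

# Master with a failing check: 250 - 20 = 230, below the Backup's 240
#   effective_priority 250 -20 0
```

In this article's setup, check_nginx.sh also stops keepalived when Nginx cannot be restarted, so failover does not depend on the priority drop alone.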

Tips: a few notes on the configuration

5. Cluster high-availability (HA) verification

Step 1 Start the Keepalived and Nginx services on the Master machine

[root@localhost keepalived]# keepalived -D -f /etc/keepalived/keepalived.conf

[root@localhost keepalived]# service nginx start

Check the Nginx processes

[root@localhost keepalived]# ps -aux|grep nginx

root 6390 0.0 0.0 20484 612 ? Ss 19:13 0:00 nginx: master process /usr/local/nginx/sbin/nginx -c /usr/local/nginx/conf/nginx.conf

nobody 6392 0.0 0.0 23008 1628 ? S 19:13 0:00 nginx: worker process

root 6978 0.0 0.0 112672 968 pts/0 S+ 20:08 0:00 grep --color=auto nginx

Check the Keepalived processes

[root@localhost keepalived]# ps -aux|grep keepalived

root 6402 0.0 0.0 45920 1016 ? Ss 19:13 0:00 keepalived -D -f /etc/keepalived/keepalived.conf

root 6403 0.0 0.0 48044 1468 ? S 19:13 0:00 keepalived -D -f /etc/keepalived/keepalived.conf

root 6404 0.0 0.0 50128 1780 ? S 19:13 0:00 keepalived -D -f /etc/keepalived/keepalived.conf

root 7004 0.0 0.0 112672 976 pts/0 S+ 20:10 0:00 grep --color=auto keepalived

Use ip add to check where the virtual IP is bound. If an entry for 192.168.43.150 appears, the VIP is bound to the Master node.

[root@localhost keepalived]# ip add

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:91:bf:59 brd ff:ff:ff:ff:ff:ff

inet 192.168.43.101/24 brd 192.168.43.255 scope global ens33

valid_lft forever preferred_lft forever

inet 192.168.43.150/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::9abb:4544:f6db:8255/64 scope link

valid_lft forever preferred_lft forever

inet6 fe80::b0b3:d0ca:7382:2779/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::314f:5fe7:4e4b:64ed/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN qlen 1000

link/ether 52:54:00:2b:74:aa brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc pfifo_fast master virbr0 state DOWN qlen 1000

link/ether 52:54:00:2b:74:aa brd ff:ff:ff:ff:ff:ff

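Step 2 Start the Nginx and Keepalived services on the Backup node and check that they are running. If the virtual IP also appears on the Backup node, the Keepalived configuration is faulty. This condition, in which both nodes hold the VIP at once, is known as split-brain.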

[root@localhost keepalived]# clear

[root@localhost keepalived]# ip add

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:14:df:79 brd ff:ff:ff:ff:ff:ff

inet 192.168.43.102/24 brd 192.168.43.255 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::314f:5fe7:4e4b:64ed/64 scope link

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN qlen 1000

link/ether 52:54:00:2b:74:aa brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc pfifo_fast master virbr0 state DOWN qlen 1000

link/ether 52:54:00:2b:74:aa brd ff:ff:ff:ff:ff:ff
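Scanning the `ip add` output by eye is error-prone, so the check can be scripted. The sketch below looks for an `inet <VIP>/<prefix>` entry in whatever text it reads on stdin. `vip_status` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: reads `ip add` output on stdin and prints "bound"
# if the given VIP appears as an inet address, "absent" otherwise.
vip_status() {
    # Escape the dots so they match literally in the regular expression
    vip_re=$(printf '%s' "$1" | sed 's/\./\\./g')
    if grep -Eq "inet ${vip_re}/[0-9]+ "; then
        echo "bound"
    else
        echo "absent"
    fi
}

# Usage on each node (not executed here):
#   ip add | vip_status 192.168.43.150
```

Running it on both nodes gives a quick split-brain test. Exactly one node should report `bound`.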

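Step 3 Verify the service

Open http://192.168.43.150 in a browser and force-refresh several times. Nodes 103 and 104 appear alternately and the page shows NGINX-1, which indicates that the Master node is forwarding the web requests.

Step 4 Stop the keepalived and Nginx services on the Master, then access the web service to observe how it fails over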

[root@localhost keepalived]# killall keepalived

[root@localhost keepalived]# service nginx stop

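Force-refreshing 192.168.43.150 now shows 103 and 104 alternating together with NGINX-2. The VIP has moved to 192.168.43.102, which confirms that the service failed over to the backup node automatically.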
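To see roughly when the switch happens, the Nginx label can be sampled in a loop and the first change located. The sketch below covers only the detection step. `first_switch` is a hypothetical helper, and the commented sampling loop assumes the page contains the `NGINX-1` or `NGINX-2` label as configured earlier.

```shell
#!/bin/sh
# Hypothetical helper: reads one label per line on stdin and prints the
# number of the first sample whose label differs from the previous one.
# Prints nothing if the label never changes.
first_switch() {
    awk 'NR > 1 && $0 != prev { print NR; exit } { prev = $0 }'
}

# Usage while stopping the Master services (not executed here):
#   for i in $(seq 1 30); do
#       curl -s http://192.168.43.150/ | grep -o 'NGINX-[0-9]*' | head -n 1
#       sleep 1
#   done | first_switch
```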

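Step 5 Start the Keepalived and Nginx services on the Master again

Verifying once more shows that the Master has taken the VIP back. The page alternates between 103 and 104 and displays NGINX-1 again. This is preemptive behavior: the Master node is configured with state MASTER, whereas non-preemptive mode, as described below, needs state BACKUP on both nodes.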

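IV. Keepalived preemptive and non-preemptive modes

Keepalived HA works in either preemptive or non-preemptive mode. In preemptive mode, once the MASTER recovers from a failure it takes the VIP back from the BACKUP node. In non-preemptive mode, the recovered MASTER does not take the VIP back from the BACKUP that was promoted to MASTER.

Non-preemptive mode configuration:

1> Add the nopreempt directive to the vrrp_instance block on both nodes, so that neither competes for the VIP.

2> Set state to BACKUP on both nodes. Once both keepalived nodes are up, each starts in the BACKUP state. After exchanging multicast advertisements, they elect a MASTER by priority. Because both are configured with nopreempt, the original MASTER does not take the VIP back after recovering from a failure, which avoids the service delay that a VIP switch might cause.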
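The two conditions above are easy to get half right, for example by adding nopreempt while leaving state MASTER in place. The sketch below is a rough lint for that case. `check_nopreempt` is a hypothetical helper, and it assumes a configuration with a single vrrp_instance and `#` comments.

```shell
#!/bin/sh
# Hypothetical helper: reads a keepalived.conf on stdin and reports whether
# nopreempt is combined with a state other than BACKUP.
# Assumes one vrrp_instance per file.
check_nopreempt() {
    awk '
        { sub(/#.*/, "") }                  # drop trailing comments
        $1 == "state"     { state = $2 }
        $1 == "nopreempt" { nopreempt = 1 }
        END {
            if (nopreempt && state != "BACKUP")
                print "warn: nopreempt with state " state
            else
                print "ok"
        }'
}

# Usage on each node (not executed here):
#   check_nopreempt < /etc/keepalived/keepalived.conf
```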

